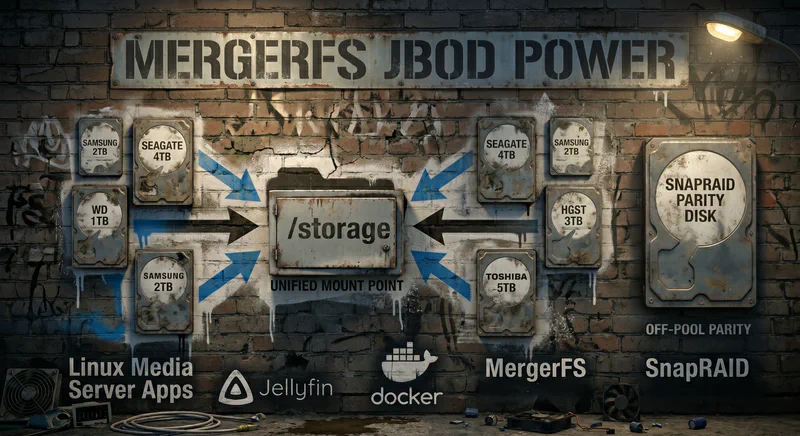

If you have ever stared at six mismatched hard drives of different sizes and wished there was a way to smash them into one big folder without buying into the ZFS religion, MergerFS is the answer. It pools whatever junk you have lying around and presents it as a single mount point that Jellyfin, Sonarr, and Radarr treat like one giant disk. When you build your own NAS storage for media, this is the path that scales without buying 8 matched drive you don’t need. Especially now with HDD prices through the roof.

This 2026 guide walks through what MergerFS actually does, why it beats ZFS for a movie/TV library, the creation policies that matter (mfs, lfs, epff, epmfs, pfrd), how to bolt SnapRAID on for parity, and exactly what to put in fstab so your NAS will boot cleanly even when one disk is misbehaving.

category.create=mfs for a balanced media pool, epmfs if you organize libraries by top-level folder, and always set minfreespace plus allow_other before pointing Docker at it.

I set this up on an and old gamming rig I had in the closet and through in four 18TB, two 24TB, drives plus a 4TB WD Red I refused to get rid of. The same fstab line has survived two drive swaps and several kernel upgrades without me having to do anything. Everything below is what I ran on that box, with the gotchas that actually bit me called out under the “Tips and Warning” boxes.

What MergerFS Actually Is (And Why It Is Not RAID)

MergerFS is a FUSE-based union filesystem written by Antonio “trapexit” Musumeci. It takes a list of source directories (usually individual drives mounted at /mnt/disk1, /mnt/disk2, etc.) and presents them as one merged directory tree at a target mount like /mnt/storage. The drives keep their own filesystems (ext4, XFS, ZFS, Btrfs, whatever), keep their own data, and stay independently readable if MergerFS ever disappears. Pull a drive out, slap it into a USB dock on a different machine, and your files are right there in plain folders.

That last point is why it eats traditional RAID and ZFS for lunch in a media server context.

The case against RAID for a movie library

Traditional RAID (RAID5, RAID6, RAIDZ) treats your drives as one striped volume. The wins are real: parallel read speed, transparent fault tolerance, and a single namespace. The losses are also real, and they hit homelabs harder than they hit datacenters:

- Same-batch failure risk: drives bought together tend to die together. A second failure during a multi-day RAID5 rebuild on 14TB+ disks is a good way to lose everything.

- No mismatched sizes: most arrays force the smallest drive’s capacity on every member. Your 14TB sits there pretending to be 4TB.

- Expansion is painful: adding a drive usually means a full reshape or a brand-new vdev.

- Total loss on catastrophic failure: lose enough drives in the wrong combination and the entire array is gone, not the files on the dead disks alone.

MergerFS sidesteps all four. Each drive is its own filesystem with its own files. If a drive dies, you lose only what was on that drive, not the pool. Mismatched sizes are the design, not a workaround. Adding storage is “format new drive, add it to the wildcard, remount.” That last property is why the unraid and jbod school of media servers exists at all: pooling without striping, so growth and failure are both easy on your sanity and wallet.

The tradeoff is honest. MergerFS does not give you striped read speeds. It does not transparently survive a drive failure on its own (you need SnapRAID or backups for that). Small random writes are slower than a native filesystem because they go through FUSE. For a media server where files are huge, written once, and read sequentially, none of those matter. For a database or a VM datastore, they matter a lot. Use the right tool.

Prerequisites and Drive Prep

Before MergerFS does anything useful, your drives need to be partitioned, formatted, and mounted individually. The pool is a view. The data lives on the source filesystems. You should be comfortable running commands as root and editing /etc/fstab. If sudo and vim /etc/fstab make you nervous, fix that first.

0. Check drive health before you touch it

Look. If you are adding shucked drives, recertified drives, drives from eBay, or anything that has been sitting in a closet, run a health check first. Formatting a dying disk is a great way to lose hours and discover the problem at 80% of a SnapRAID sync.

Install smartmontools and run a short test on each drive:

sudo apt install smartmontools

sudo smartctl -t short /dev/sdb

To see the progress and review the results:

sudo smartctl -l selftest /dev/sdb

sudo smartctl -a /dev/sdb

You want PASSED under the SMART overall-health status, low values for Reallocated_Sector_Ct, Current_Pending_Sector, and Offline_Uncorrectable, and a power-on hours count that matches what the seller advertised. If anything looks ugly, run a long self-test (sudo smartctl -t long /dev/sdb, then check back in a few hours with sudo smartctl -l selftest /dev/sdb) before committing the disk to the pool.

1. Install MergerFS from upstream, not apt

The Ubuntu/Debian repository version lags the upstream release by months and is missing fixes that matter (the noforget and inodecalc improvements, in particular). Pull the latest .deb from the GitHub releases page GitHub mergerfs releases:

wget https://github.com/trapexit/mergerfs/releases/download/2.42.0/mergerfs_2.42.0.debian-trixie_amd64.deb

sudo dpkg -i mergerfs_2.42.0.debian-trixie_amd64.deb

mergerfs --version

Substitute trixie for bookworm/forky/etc. depending on your distro. Verify the version is current (2.42.0 at the time of writing).

2. Partition and format each drive

You can use ext4 for media drives because it is boring, well-supported, and resizes cleanly. I use XFS, I find it offers slightly better large-file performance. Avoid ZFS on individual MergerFS branches unless you specifically want ZFS features, because the FUSE layer eats most of the benefits.

sudo parted /dev/sdb -- mklabel gpt

sudo parted /dev/sdb -- mkpart primary ext4 0% 100%

sudo mkfs.ext4 -L disk1 /dev/sdb1

sudo mkdir -p /mnt/disk1

Repeat for each disk, incrementing the label and mount point. Add each one to /etc/fstab by UUID:

sudo blkid /dev/sdb1

# UUID="abc123..." TYPE="ext4"

If blkid returns nothing, the partition table did not get written. Re-run the parted commands and check lsblk to confirm /dev/sdb1 exists before formatting.

UUID=abc123... /mnt/disk1 ext4 defaults,noatime,nofail 0 2

UUID=def456... /mnt/disk2 ext4 defaults,noatime,nofail 0 2

UUID=ghi789... /mnt/disk3 ext4 defaults,noatime,nofail 0 2

The nofail is non-negotiable. Without it, a single failed disk will drop your server into emergency mode at boot. Reboot and run df -h to confirm every disk shows up before going further.

3. Decide on a layout

Two layouts dominate. Pick one before you copy data, because changing later is annoying.

- Flat pool: every disk has the same top-level structure (or no structure at all) and MergerFS distributes new files across them by free space. Use

category.create=mfs. Simplest, works for everyone. - Path-aware pool: every disk has the same top-level folder names (

/mnt/disk1/movies/,/mnt/disk1/tv/,/mnt/disk2/movies/,/mnt/disk2/tv/) and MergerFS routes new files to the disk that already has the matching path. Usecategory.create=epmfs. Better for “I want all of Breaking Bad on one disk so a failure only loses Breaking Bad” thinking.

I run mfs for my library.

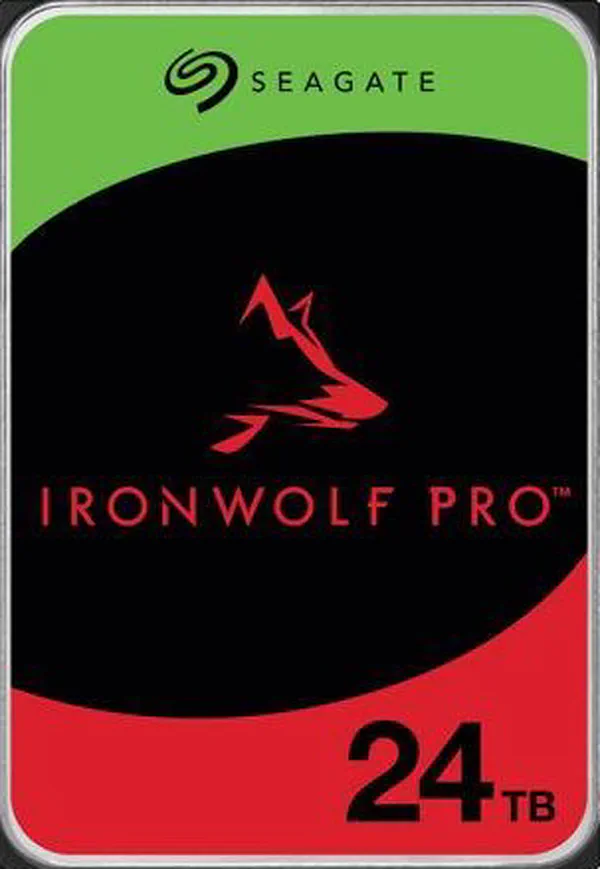

A pool of identical drives is not the goal here. But if you are starting fresh and want to seed a multi-bay build with one or two big disks you can grow around, this is the slot they fit into.

The Policy Cheat Sheet (mfs vs lfs vs epff vs epmfs vs pfrd)

This is the part of MergerFS that confuses everyone. There are policies for create, search, and action operations. For media servers, you almost always only care about category.create. The relevant create policies, in plain English:

- mfs (most free space): write the new file to the branch with the most free space. Best general-purpose pick. Spreads writes evenly so disks fill at roughly the same rate.

- lfs (least free space): write to the branch with the least free space (that still has room). Fills disks one at a time. Useful if you want most of your drives spun down most of the time, at the cost of zero balancing.

- epff (existing path, first found): only write to a branch that already has the parent path; pick the first one found. Path-aware but order-dependent.

- epmfs (existing path, most free space): only write to a branch that already has the parent path; pick the one with the most free space among those. Path-aware and balanced. The right pick for organized libraries.

- pfrd (proportional fill, random distribution): weighted random pick by percentage free. The default in current MergerFS releases. Statistically balances drives without the “always picks the same disk” failure mode that mfs can have when sizes are mismatched [trapexit docs].

For the build-your-own-NAS-storage crowd: start with mfs if your library is flat, epmfs if you organize by /movies, /tv, /music folders, and only deviate if you have a specific reason. Ignore lfs unless you have actually measured the spin-up cost on your hardware.

epff with a single existing path on disk1. Switched to mfs and balance restored itself within a few weeks of new downloads. If you do not need path stickiness, do not opt into it.

fstab Configuration That Actually Survives Reboots

The fstab line is where most first-time MergerFS setups break. Here is the line I run on Debian 13, broken down option by option:

# List EVERY source disk in x-systemd.after.

/mnt/disk* /mnt/storage fuse.mergerfs defaults,allow_other,inodecalc=path-hash,cache.files=off,dropcacheonclose=true,category.create=epmfs,minfreespace=50G,fsname=mergerfs,nofail,x-systemd.after=/mnt/disk1,x-systemd.after=/mnt/disk2,x-systemd.after=/mnt/disk3 0 0

/mnt/disk*(the source): glob expansion picks up every numbered disk mount. Add a new disk by mounting it at/mnt/disk4.defaults: standard mount options.allow_other: lets users other than root see the pool. Required for Docker containers, Jellyfin, Sonarr, Radarr, Samba, NFS, and pretty much anything useful. Without it your apps see an empty directory.inodecalc=path-hash: hashes the relative path of the entry in question. This means the inode value will always be the same for that file path.cache.files=off+dropcacheonclose=true: disables FUSE page caching and drops kernel cache when files close. Counter-intuitive but correct for media. The kernel already caches reads from the underlying filesystems, and the FUSE cache layer mostly causes stale-data bugs in apps that mmap files (Jellyfin’s metadata scanner is one).category.create=epmfs: see the policy section above.minfreespace=50G: refuse to write to a branch with less than 50GB free. Stops MergerFS from cramming the last 200MB onto a nearly-full disk and producing ENOSPC errors mid-import.fsname=mergerfs: cosmetic, makesdf -hshowmergerfsinstead of a random path.nofail: do not block boot if the source disks are not ready.x-systemd.after=/mnt/diskN: tells systemd to wait for those source mounts before trying the pool. Without this, on slow-spinning HDD systems, fstab tries to mount the pool before the disks are mounted, and you get a ghost empty pool. List every disk in the pool.

After editing /etc/fstab:

sudo systemctl daemon-reload

sudo mount -a

df -h /mnt/storage

You should see the combined size of all source disks. Write a test file, then check which physical disk it landed on:

echo "test" > /mnt/storage/hello.txt

ls -la /mnt/disk*/hello.txt

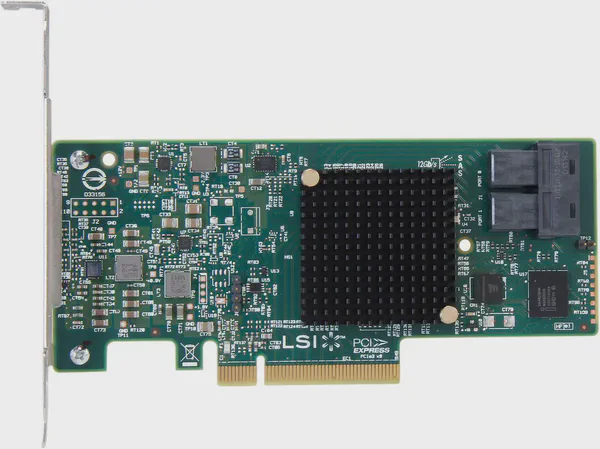

Once the pool mounts cleanly, the next thing you will care about is what is actually under the hood. If you are running drives off motherboard SATA today, you will hit the port count ceiling fast. The fix is an HBA in IT mode.

With the HBA sorted, the fstab line above is portable: the source glob does not care whether the drives hang off motherboard SATA or an LSI card.

Performance Tuning for Jellyfin, Sonarr, and Radarr

The defaults above are tuned for media. A few extra tweaks help when you are pushing 4K remuxes around.

Permissions and ownership

Pick a UID/GID for your media stack and use it everywhere. I use media:media (UID 1000, GID 1000) on the host, mapped through every Docker container with PUID=1000/PGID=1000. Then chown the pool:

sudo chown -R media:media /mnt/storage

sudo chmod -R 775 /mnt/storage

Mismatched UIDs between host and containers are the number-one cause of “Sonarr can see the file but Jellyfin cannot play it.” Pick one and stick with it.

Optional SSD cache branch

MergerFS does not have a true write-back cache, but you can fake one with a “tiered cache” pattern. Mount an SSD at /mnt/cache, build a second pool that prefers the SSD for new writes, then rsync nightly from cache to spinning drives. The community tool mergerfs.cache.tool (from the trapexit/mergerfs-tools repo) automates the move.

# Two pools: one for writes (SSD-first), one for reads (everything).

/mnt/cache:/mnt/disk* /mnt/writes fuse.mergerfs defaults,allow_other,use_ino,category.create=ff,minfreespace=20G,fsname=mergerfs-writes,nofail 0 0

/mnt/cache:/mnt/disk* /mnt/storage fuse.mergerfs defaults,allow_other,use_ino,category.create=epmfs,minfreespace=50G,fsname=mergerfs,nofail 0 0

Then schedule the move with cron:

# /etc/cron.d/mergerfs-cache

0 3 * * * root /usr/bin/mergerfs.cache.tool -m /mnt/cache -p /mnt/disk -t 80 >> /var/log/mergerfs-cache.log 2>&1

The -t 80 flag tells the tool to drain the cache when it crosses 80% full. Flag names and behavior have shifted across mergerfs-tools releases, so run mergerfs.cache.tool --help against your installed build before trusting that exact line. For most homelabs this is over-engineering. For a server that ingests a lot of new content during the day and wants the spinning disks parked, it earns its keep.

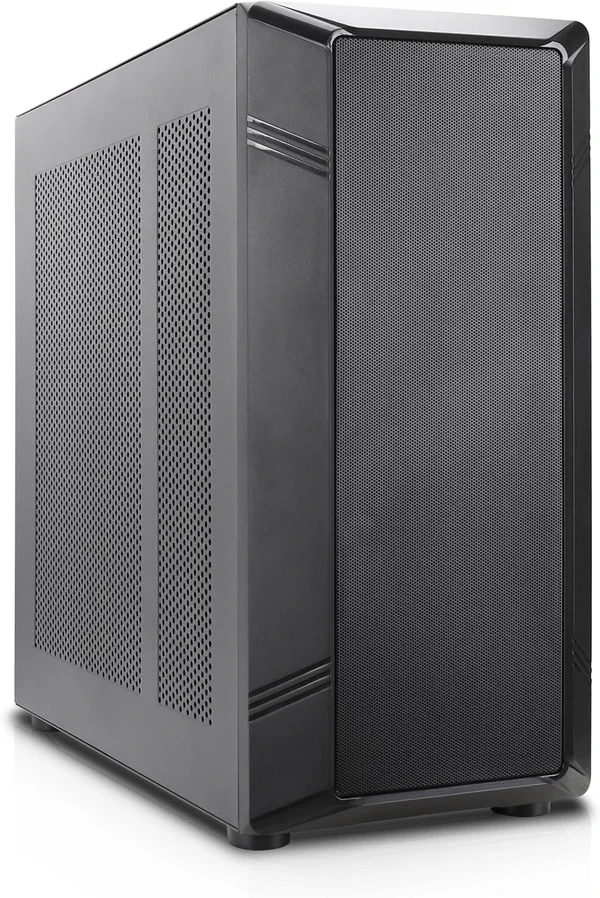

If you are still planning the chassis, port count and drive bays drive every later decision. An eight-bay case with room for an HBA gives you headroom for two or three drive generations of growth.

SnapRAID: The Parity Layer MergerFS Does Not Provide

MergerFS by itself protects you from nothing. Lose a disk, lose its files. SnapRAID adds parity, checksums, and silent-corruption detection on a schedule, which fits write-once-read-many media perfectly.

Install:

sudo apt install snapraid

Configure /etc/snapraid.conf:

# Parity disk(s): must be at least as large as your largest data disk.

# NOT part of the MergerFS pool.

parity /mnt/parity1/snapraid.parity

# Content files: SnapRAID writes the file list and checksums here.

# Keep one copy off the data disks plus one on each data disk for redundancy.

# If a data disk dies, you still have a content file on the surviving disks.

content /var/snapraid/snapraid.content

content /mnt/disk1/snapraid.content

content /mnt/disk2/snapraid.content

# Data disks: point to the underlying mounts, NOT the pool path.

# SnapRAID needs the raw filesystem to compute parity correctly.

data d1 /mnt/disk1

data d2 /mnt/disk2

data d3 /mnt/disk3

# Excludes: skip transient files and the downloads branch.

exclude *.unrecoverable

exclude /tmp/

exclude /lost+found/

exclude downloads/

The parity disk is not part of the MergerFS pool. It sits separately, dedicated to parity, and must be at least as large as your largest data disk. The data d1 /mnt/disk1 lines point to the source disks, not the pool, because SnapRAID needs to see the underlying filesystem to compute parity correctly.

Initial sync:

sudo snapraid sync

This builds the parity file. On a multi-TB library, expect this to take many hours. Schedule a regular sync + scrub via cron or a systemd timer. The community wrapper snapraid-runner (Python) handles “sync, then scrub a percentage, then email me” with one config file. That is what I run nightly.

When a drive dies, you replace it, mount the new disk at the same path, and run snapraid fix -d d2 to rebuild only the dead disk’s contents. No array reshape. No rebuild stress on the surviving disks.

snapraid sync after big imports. The cron job is for steady-state, not for the night you dumped a 4TB archive onto the pool.

Upgrading and Replacing Drives Without Rebuilds

This is the killer feature, and it is genuinely as simple as advertised.

Adding a new drive

# 0. Check the new drive's health before you trust it

sudo smartctl -a /dev/sde

# 1. Partition, format, mount

sudo parted /dev/sde -- mklabel gpt mkpart primary ext4 0% 100%

sudo mkfs.ext4 -L disk5 /dev/sde1

sudo mkdir /mnt/disk5

sudo blkid /dev/sde1 # grab UUID

# 2. Add to fstab (same pattern as the other disks)

echo "UUID=newuuid /mnt/disk5 ext4 defaults,noatime,nofail 0 2" | sudo tee -a /etc/fstab

sudo mount /mnt/disk5

# 3. If using epmfs, mirror your top-level folders so files route correctly

sudo mkdir -p /mnt/disk5/{movies,tv,music}

sudo chown media:media /mnt/disk5/{movies,tv,music}

# 4. Remount the pool to pick up the new branch

sudo umount /mnt/storage

sudo mount /mnt/storage

# 5. Add it to snapraid.conf as data d5 /mnt/disk5, then sync

sudo snapraid sync

Total downtime: a few seconds during the umount/mount. New writes start landing on the new drive immediately because it has the most free space.

Replacing a failing drive

If smartctl is screaming and the disk is still readable:

# 1. Mount new disk at /mnt/disk2-new

# 2. rsync the dying disk to it

sudo rsync -aHAX --info=progress2 /mnt/disk2/ /mnt/disk2-new/

# 3. Unmount old, mount new at the same path

# 4. Update fstab UUID, sudo mount /mnt/disk2

# 5. snapraid sync (parity is unchanged because data is the same)

If the disk is dead and unreadable, skip the rsync and let SnapRAID rebuild instead:

# 1. Replace physical disk, format, mount at /mnt/disk2

# 2. Run snapraid fix to rebuild that disk's contents from parity

sudo snapraid -d d2 -l fix.log fix

sudo snapraid -d d2 -l check.log check

sudo snapraid sync

This is the moment when SnapRAID earns its keep. Without it, those files are gone.

One more piece you will want before you start swapping drives in earnest: the right cable between your HBA and the bays.

Troubleshooting Common MergerFS Failures

“Permission denied” or “no such file” from Docker

You forgot allow_other. Add it to the fstab options, remount, restart the container. If you still see denials, check that the container’s PUID/PGID match the owner of files in the pool.

Pool shows as empty after reboot

The pool mounted before the source disks did. Add x-systemd.after=/mnt/diskN for every required disk to the fstab options, run systemctl daemon-reload, reboot to verify. If you only listed disk1 and disk1 is the slow or dead one, you will reproduce the bug.

df shows weird sizes

If your pool is at /mnt/storage and a source disk is also visible inside the pool path (a common typo: /mnt/disk* matching /mnt/disk-old-backup), you will see double-counted space. Make sure your wildcard only matches active source disks.

Slow writes on large files

Try direct_io,noforget instead of cache.files=off,dropcacheonclose=true. direct_io bypasses the page cache entirely on the FUSE side, which on modern drives (200MB/s+ sequential) is usually a wash or slight win. noforget reduces inode churn.

“Stale file handle” after long-running operations

Almost always a use_ino issue. Make sure it is set. If the problem persists, check that the underlying filesystems all support consistent inodes (ext4, XFS yes; some FAT variants no).

SnapRAID sync warns about too many changed files

SnapRAID has a default threshold for how many deletes/moves it will accept without a --force-empty or similar flag. If you reorganized your library, this is correct, paranoid behavior. Read the warning, confirm it matches what you actually did, then run with the suggested flag.

Frequently Asked Questions

➤ How do I add a new drive to MergerFS without losing data or rebuilding?

➤ Should I use mfs or epmfs for a media server with separate movies and TV folders?

➤ Does MergerFS work with existing data on drives, and how does it handle mismatched sizes?

➤ Why pair MergerFS with SnapRAID instead of using ZFS or RAID?

➤ What happens if a drive fails in MergerFS, do I lose everything like RAID5?

Wrapping Up

MergerFS solves a specific problem: pooling commodity drives into one mount for a Linux media server, without inheriting RAID’s brittleness or buying matched-drive sets you do not need. Pair it with SnapRAID for parity, set category.create=mfs or epmfs based on your library layout, lock down the fstab options (allow_other, use_ino, cache.files=off, minfreespace=50G, nofail, and an x-systemd.requires entry per source disk), and use the same UID/GID across host and containers. That stack will outlive several drive generations.

Next steps if you are building this out:

- Read trapexit’s official docs at GitHub mergerfs releases for the full list of policies and runtime options.

- For ongoing health monitoring, run

smartctlchecks on each source disk via a weekly cron and asnapraid scrubon 5-10% of the pool nightly.

Build your own NAS storage that grows with you, not against you. The first time you swap a 4TB for a 14TB without anything noticing, you will understand why the unraid and jbod approach has stuck around.